Loading columns from staged data files using a COPY INTO statement:Īs file format options specified directly in the COPY INTO statement.Īs file format options specified for a named file format or stage object. The named file format/stage object can then be referenced in the SELECT statement. Querying staged data files using a SELECT statement:Īs file format options specified for a named file format or stage object. To explicitly specify file format options, set them in one of the following ways: Specify the format type and options that match your data files. To transform JSON data during a load operation, you must structure the data files in NDJSON (“Newline delimited JSON”) standard format otherwise, you might encounter the following error:Įrror parsing JSON: more than one document in the input All other file format types : There is no requirement for your data files to have the same number and ordering of columns as your target table. When querying staged data files, the ERROR_ON_COLUMN_COUNT_MISMATCH option is ignored. If the source data is in another format, specify the file format type and options. The default record delimiter is the new line character. The default field delimiter is a comma character ( ,). The default format is character-delimited UTF-8 text. To parse a staged data file, it is necessary to describe its file format: CSV :

The following file format types are supported for COPY transformations: This section provides usage information for transforming staged data files during a load. The ENFORCE_LENGTH | TRUNCATECOLUMNS option, which can truncate text strings that exceed the target column length.įor general information about querying staged data files, see Querying Data in Staged Files. This feature applies to both bulk loading and Snowpipe.Ĭolumn reordering, column omission, and casts using a SELECT statement. This feature helps you avoid the use of temporary tables to store pre-transformed data when reordering columns during a data load.

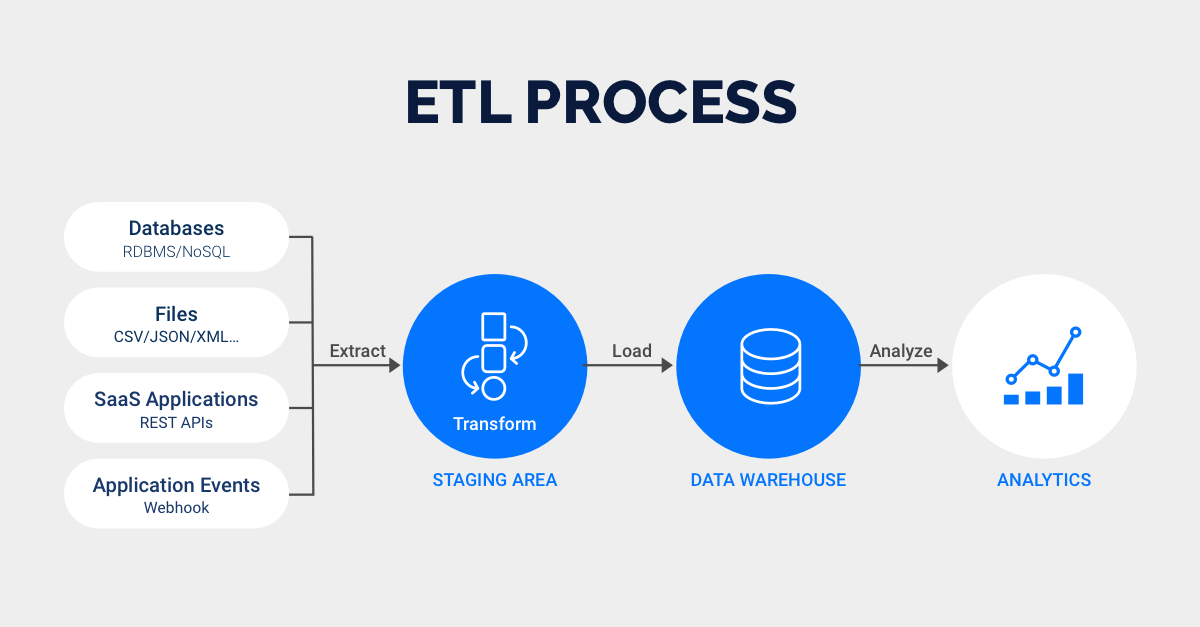

Snowflake supports transforming data while loading it into a table using the COPY INTO command, dramatically simplifying your ETL pipeline for basic transformations. Guides Data Loading Transforming Data During Load Transforming data during a load ¶
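The NDJSON requirement for JSON transformations means each JSON document must sit on its own line in the data file. As a minimal sketch of preparing such a file before staging it, the following Python snippet converts a JSON array into NDJSON text. The function name `to_ndjson` and the sample records are hypothetical and used only for illustration; they are not part of Snowflake.

```python
import json


def to_ndjson(json_text: str) -> str:
    """Convert a JSON array (or a single JSON document) into NDJSON text.

    Hypothetical helper for illustration: each document is written
    on its own line, terminated by a newline character.
    """
    parsed = json.loads(json_text)
    # Treat a single top-level document as a one-element list.
    records = parsed if isinstance(parsed, list) else [parsed]
    # json.dumps without an indent never emits raw newlines, so every
    # document stays on exactly one line.
    return "".join(json.dumps(r, separators=(",", ":")) + "\n" for r in records)


# Made-up sample data: a JSON array holding two documents.
sample = '[{"city": "Lexington", "price": 342100}, {"city": "Belmont", "price": 498000}]'
ndjson = to_ndjson(sample)
print(ndjson, end="")
```

Each output line is a complete JSON document, which is the layout the NDJSON format calls for. Writing the result to a file and staging it is left out here, since those steps depend on your environment.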
